Workshop organised by Andrea Farina (Department of Digital Humanities) and Francesca Lam-March (Department of Classics).

The Department of Digital Humanities at King’s College London is excited to announce a unique opportunity for scholars interested in the intersection of Classics and digital methodologies. We invite you to participate in the second workshop of our Data Driven Classics series, Data Driven Classics: Interdisciplinary Connections through Shared Data, which takes place on 27th June 2025.

Webpage: https://www.kcl.ac.uk/events/data-driven-classics-interdisciplinary-connections-through-shared-data-workshop

Date: 27th June 2025

Time: 9:30 AM – 5:00 PM

Venue: King’s College London, Embankment Room MB-1.1.4 (Macadam Building, Strand Campus)

About the Workshop (more info on our webpage):

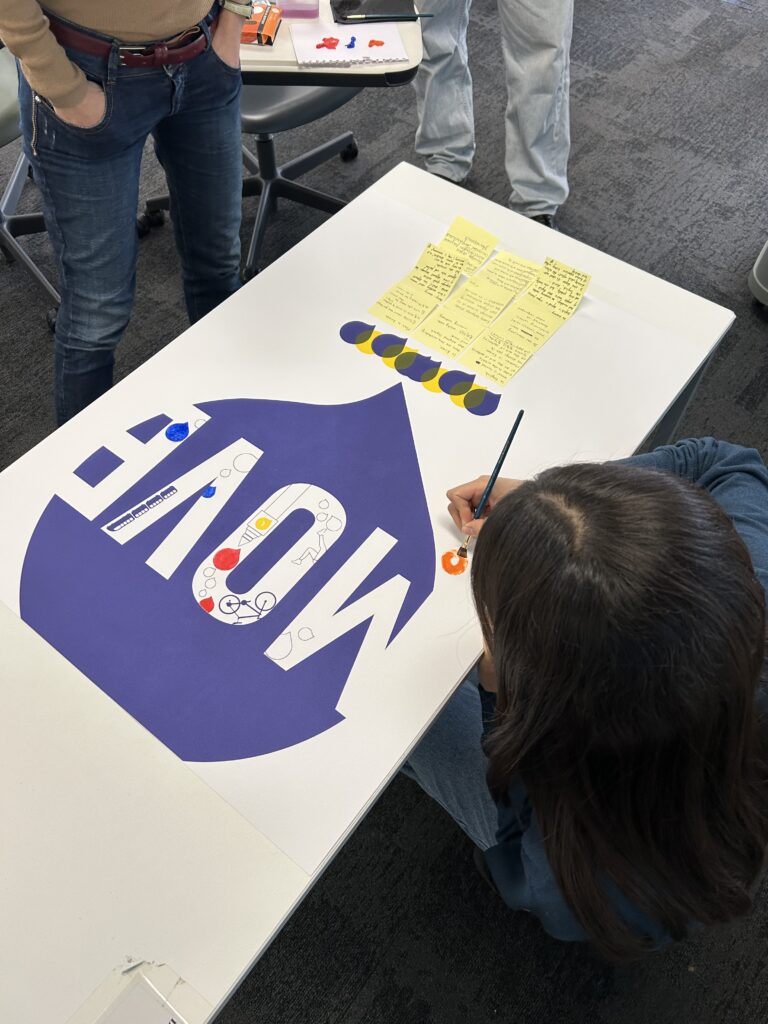

The workshop focuses on the role of curated datasets in advancing open research in Classics through digital humanities. It aims to enhance participants’ understanding of data-sharing, interoperability, and collaboration. The event includes expert presentations on published datasets, discussions on data use, and hands-on sessions where participants work with datasets, receive feedback, and explore best practices for sharing data. Topics covered include repository types, licensing, DOI assignment, and README files. The workshop concludes with a roundtable discussion on fostering further collaboration and data-sharing in the academic community.

Invited speakers:

Dr Marton Ribary (Royal Holloway University of London), Open humanities data: Creation, collection, research and access

Dr Mar A Rodda (Merton College Oxford), Classics through data: why is it difficult and why is it still worth it?

Dr Mathilde Bru (King’s College London), Datasets in Classics: sharing your research as a Classicist

Dr Gabriel Bodard (Institute of Classical Studies), Inscriptions, Prosopography, Linked Open Data: standards, connectivity and sustainability in recent digital classics projects

Who can attend:

This workshop is open to postgraduate students, researchers, and staff members interested in Classics, regardless of their level of expertise in digital methodologies. We especially encourage participation from those with an interest in linguistics, archaeology, history, and related fields. Applications are welcome from both within and outside King’s College London. Preference will be given to applicants whose cover letters demonstrate that their research projects or professional pursuits would benefit from the event. We also aim to maintain a balanced representation across disciplinary backgrounds.

No prior knowledge of computational or digital methods, or experience working with data or datasets, is required, as the workshop is designed to help attendees build these skills and apply them effectively in their research. Those with prior experience in either area are more than welcome to send us a cover letter for participation, as activities will be tailored to accommodate participants’ diverse backgrounds and levels of expertise.

Registration and logistics:

Seats for this workshop are limited. To apply for participation, please email Andrea Farina (andrea.farina@kcl.ac.uk) and Francesca Lam-March (francesca.1.lam-march@kcl.ac.uk), attaching a cover letter no longer than one page in .pdf format and writing “Data Driven Classics Registration” as the subject of your email. In your cover letter, please (1) state your name, affiliation, position (student, PhD student, Lecturer, etc.), email address, and your field in Classics (e.g., linguistics, history), and (2) explain why you would like to attend the workshop and how it can benefit your research.

There is no registration fee for this event. However, participants are responsible for covering their own travel expenses, or for arranging funding through their own institutions. The workshop will accommodate a maximum of 15 participants to ensure adequate assistance during the hands-on session.

Important dates:

Deadline to submit expression of interest with cover letter: 20 April 2025.

Notification of acceptance: 30 April 2025.

Event: 27 June 2025.

Contact Information:

For any inquiries or further information, please contact Andrea Farina (andrea.farina@kcl.ac.uk).