How do artists use the backend of machine learning in their artworks? How is machine learning transforming the making of meaning? These were the questions raised in the Creative AI Lab’s presentation for the National College of Art and Design, Dublin (NCAD) Digital Culture Webinar Series, hosted by Elaine Hoey (New Media Artist) and Dr. Rachel O’Dwyer (Lecturer in Digital Cultures, NCAD).

In a presentation titled ‘Inquiring the Backend of Machine Learning Artworks: Making Meaning by Calculation’, the Creative AI Lab’s Eva Jäger (Associate Curator of Serpentine Galleries Arts Technologies) and Mercedes Bunz (Senior Lecturer in Digital Society, KCL) shared their research (video here) into how artists use the backend of machine learning (ML) to develop their work. Their presentation was followed by a talk from Zac Ionnidis, who spoke about Forensic Architecture’s use of AI to automate parts of human rights monitoring research.

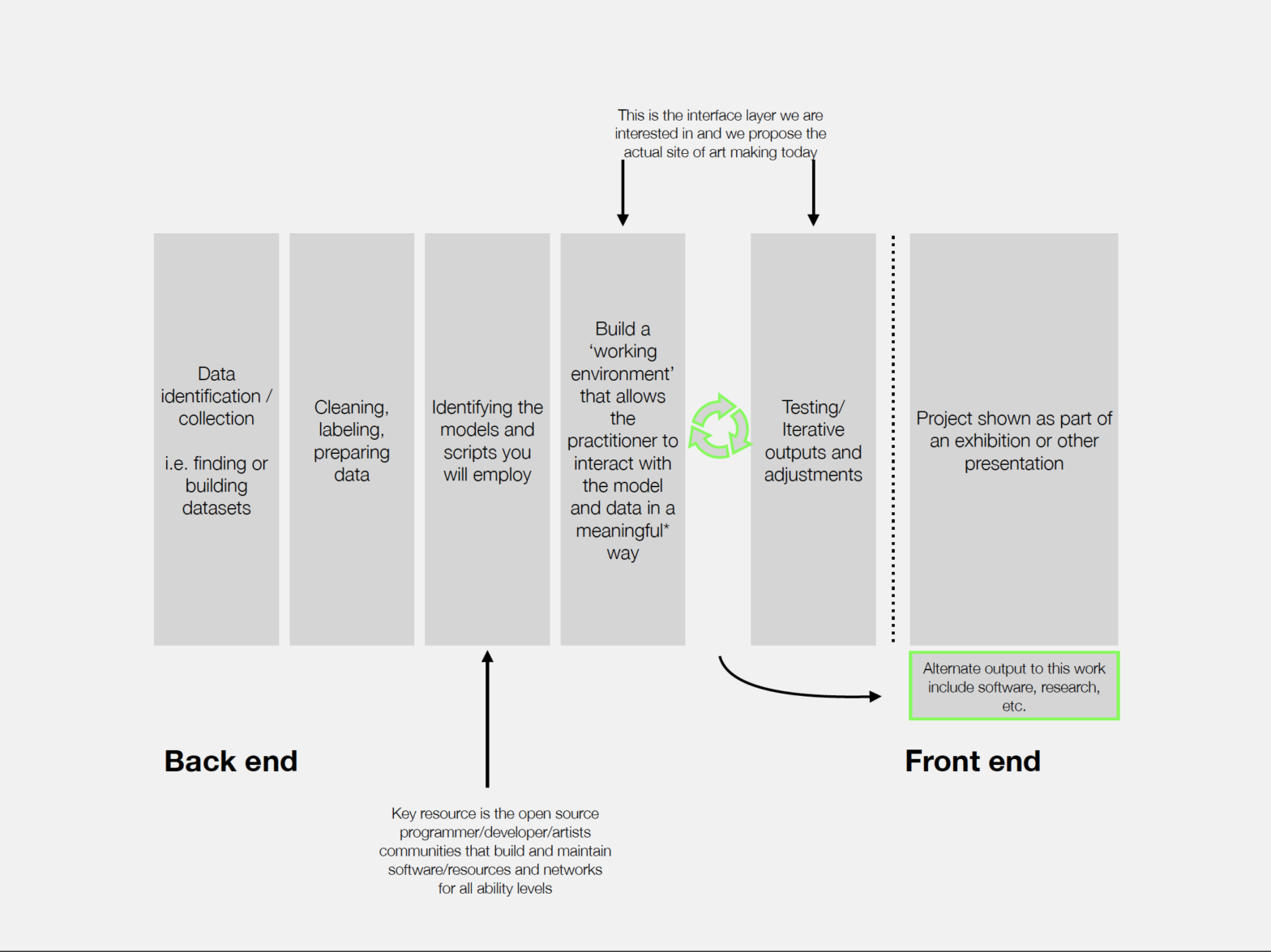

The research of the Creative AI Lab, a collaboration between the Department of Digital Humanities, KCL and the Serpentine Gallery, surfaced particular ways in which artists use machine learning to create models for engaging with these new technologies. Mercedes Bunz outlined some of the inspirations for the research, citing Zylinska’s book ‘AI Art: Machine Visions and Warped Dreams’ and Søren Pold’s ‘The Metainterface’. Eva Jäger then explained why it is important to research the artistic use of the backend of ML. She elaborated on what constitutes the backend and said that this work often leads to an “alternate output which includes building or developing software and publishing/sharing research”.

Mercedes Bunz continued the presentation by arguing against the idea that only humans can create meaning. She referred to the work of Stuart Hall, who developed a more open approach when researching the encoding and decoding of meaning in television programmes.

Next, she asked how the materiality of ML is entangled in meaning making, and whether contemporary artworks using ML can help people to understand it better. To explore this, the researchers drew on case studies of four artists, conducted through virtual interviews and studio visits. The first case study was of Refik Anadol, whose work dramatises data through immersive environments and moving sculptures. Some of his works are supported by Nvidia, giving him access to powerful computational resources and not-yet-released technology. His ‘Latent Space Visualiser’ (or t-SNE) allows one to navigate a ‘data universe’.

https://twitter.com/refikanadol/status/1309013024221560836?s=20

The second artist, Adam Harvey, focuses on computer vision, privacy and surveillance. His most recent project, V-Frame, analyses video footage documenting human rights violations, in cooperation with the NGO Mnemonic. His work reveals the inner workings of AI systems at a conceptual level.

Weili Shi, the third artist, looks at digital media as a means of image generation. In his project TerraMars, he imagines alternate planets. His work makes the technical steps in ML transparent, working with backends to create new visualisations.

Lastly, Allison Parrish’s work looks at phonetic similarities in poetry and includes an ML model that generates imaginary words. All of her work is open source and uses collaborative platforms, which is itself a way of collaborating through the backends of ML.

Mercedes Bunz and Eva Jäger’s research shows how artists have been shifting the use of ML in art from the notion of automation to one of collaboration. They conclude that machine learning does not replace artistic practice. A question and answer session followed the two presentations, with the moderators and online audience asking about topics including open source, the ethical standards artists should bear in mind when using AI, and the ease of using ML technology.

Mercedes Bunz and Eva Jäger’s research into the backend of ML will be published in the future. Titled ‘Inquiring the Backend of Machine Learning Artworks: Making Meaning by Calculation’, the research has already been presented at the Art Machine 2 symposium on 13 June 2021.